This is part one of a two-part topic, where I talk about what the heck COPPA is. Part two, where I talk about YouTube alternatives, is coming soon.

At SparkleCon 2020, I gave a talk entitled “Farewell to YouTube: Alternatives in the wake of COPPA” at which I promised to show my work. I’ve done a lot of research on this complicated topic, and I like to share, so here we go.

Note that I am not a lawyer and this isn’t legal advice. I’m just a very interested party who wanted to understand the situation as best I could.

And turns out it’s super complicated, so understanding to the best of one’s ability is still kinda relative.

Whattf is COPPA?

COPPA stands for the Children’s Online Privacy Protection Act (sometimes “Rule,” but I guess they didn’t like COPPR) which was passed in 1998. It’s long, and I will only quote very small bits at you, but here’s the summary:

COPPA imposes certain requirements on operators of websites or online services directed to children under 13 years of age, and on operators of other websites or online services that have actual knowledge that they are collecting personal information online from a child under 13 years of age.

https://www.ftc.gov/enforcement/rules/rulemaking-regulatory-reform-proceedings/childrens-online-privacy-protection-rule

Basically, it says that you can’t gather personally-identifying information about kids without parental consent.

You can’t gather it actively or passively, and if you do wind up with information about children, you have to take active measures to either delete that information right away or notify parents of the information you have or want to obtain and get their consent.

They get detailed and specific about all of the ways that you can try to get verifiable parental consent. It’s not a small task.

Personally identifying information includes a lot of things you’d normally expect: first and last name, address, phone number, photos/videos/audio, etc. These were included from the beginning and make obvious sense. We don’t want children putting their address online and making themselves vulnerable to predators and such.

Personally identifying information also includes, since the FTC made updates in 2013, persistent identifiers, cookies, IP addresses, and unique device identifiers. The internet is a different place now and so much of what you do is tied into cookies. Turns out just about everything YouTube does is tied into cookies. The algorithm (all hail the mighty algorithm) is based on persistent identifiers telling it what you’ve watched and what others have watched. Personalized advertising is based on it too.

So yeah, YouTube.

In 2018, a coalition of child advocacy groups filed a complaint with the FTC (which is in charge of enforcing COPPA), saying that YouTube/Google was violating the rules. There was an investigation and eventual lawsuit, because evidence.

YouTube: “Look, COPPA doesn’t apply to us, you have to be 13 to use our site and make an account. There aren’t kids here, don’t look at the baby shark behind the curtain.”

Also YouTube: “Hey advertisers, we have SO MANY young kids on our platform and you should TOTALLY give us money.”

Like it was in their marketing materials.

So obviously, the FTC sued them, and they eventually settled for $170 million plus agreeing to make certain changes to the platform. Note that the FTC didn’t tell them exactly what they needed to do. That’s not their job, and they really don’t understand the internet anyway (according to Kreekcraft, who visited with them, they refer to individual channels as websites). They just need to make sure there aren’t violations of the rules.

The FTC demanded that YouTube

- Make a way for content creators to be able to identify which videos are directed to kids

- Notify channel owners that their content is subject to COPPA and that the FTC will evaluate their content, potentially fining creators who misrepresent their content up to $42,530 per video

- Provide training to employees who work with creators

So YouTube made changes (announced in September 2019, detailed in November, in effect as of January 2020). There are two big parts of it that impact creators.

First, there’s the new “Made for Kids” category. Every creator needs to specify whether each video is directed to kids or not. Second, they announced that in order to help people categorize their videos, they would have machine learning systems go through the site and automatically mark videos that the systems think are Made for Kids.

This isn’t whether the video is kid-friendly or intended for children, but basically if kids are likely to be watching.

Gee, that’s not vague at all. But wait, it gets better.

To be fair to the FTC, intentionally for-kids content varies widely and it can’t be easy to quantify it, but in order to make their rules flexible, they also made them really frackin vague.

In determining whether a Web site or online service, or a portion thereof, is directed to children, the Commission will consider its subject matter, visual content, use of animated characters or child-oriented activities and incentives, music or other audio content, age of models, presence of child celebrities or celebrities who appeal to children, … The Commission will also consider competent and reliable empirical evidence regarding audience composition, and evidence regarding the intended audience.

https://www.ecfr.gov/cgi-bin/text-idx?SID=4939e77c77a1a1a08c1cbf905fc4b409&node=16%3A1.0.1.3.36&rgn=div5#se16.1.312_12

But wait, Barb! There are cartoons for adults! And everyone likes bright colors, and there are kids in lots of videos that aren’t made for kids, and what do they mean by “celebrities who appeal to children??”

The going belief is that they’re not going to crack down on things that are clearly not directed to kids but have some of these things (such as adult cartoons), but this wording is made for people trying to keep their options open. It’s not remotely helpful for people trying to ensure that they aren’t breaking the law. For example, child-oriented activities could include video games and arts & crafts that appeal to all ages. It is confusing and upsetting to content creators affected by it, and the threat of a lawsuit is causing a lot of creators to play it safe and mark their videos Made for Kids if they’re uncertain.

The effects on creators vary from crummy to career-changing

Like I said, the law isn’t about making the content safe for children, it’s about not collecting information about kids in places (videos) where kids are likely to be. So YouTube decreed that a video that is marked “Made for Kids” will lose personalized advertising, comments, notifications, recommendations, playlists, and community tabs (for entirely Made for Kids channels). Anything that could be construed as gathering information.

This is where the YouTube community started freaking out.

Yes, I know YouTubers are prone to drama, but it’s not all overreacting. YouTube provides video hosting, interaction/discoverability, and the potential for monetization. Let me lay out the implications of the features YouTube is pulling:

Contextualized advertising is what we’re used to from TV. If you remember TV. The stations don’t know exactly who is watching, just the demographic that’s being targeted, so they base their ads on the context of the show. Personalized advertising is based on the user’s past behavior and is way more effective than contextual advertising. As a result, advertisers will pay a lot more for personalized than contextual. But if you can’t gather information about any viewers of a particular video, you can only show contextualized ads, and the ad revenue of a video will be lower. Estimates have it at 1/10th as much.

For content creators who do this for a living, the possibility of losing 90% of their ad revenue income from some or all of their videos is terrifying.
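To put rough numbers on that, here’s a minimal Python sketch. The 1/10th ratio is the estimate quoted above; the function name, the dollar amount, and the shares of revenue coming from Made for Kids videos are all made up for illustration and aren’t real YouTube figures.

```python
# Rough estimate only: the 0.1 ratio is the "1/10th" figure quoted above,
# and every other number here is a hypothetical example, not YouTube data.
CONTEXTUAL_RATIO = 0.1


def estimated_ad_revenue(current_revenue, made_for_kids_share, ratio=CONTEXTUAL_RATIO):
    """Estimate ad revenue after some share of it moves to contextual-only ads.

    current_revenue: ad revenue today, assumed to come entirely from
        personalized ads.
    made_for_kids_share: fraction (0.0 to 1.0) of that revenue coming from
        videos that end up marked Made for Kids.
    """
    if not 0.0 <= made_for_kids_share <= 1.0:
        raise ValueError("made_for_kids_share must be between 0 and 1")
    unaffected = current_revenue * (1 - made_for_kids_share)
    affected = current_revenue * made_for_kids_share * ratio
    return unaffected + affected


if __name__ == "__main__":
    # Hypothetical channel earning 1,000 a month in ad revenue.
    for share in (0.0, 0.5, 1.0):
        print(share, round(estimated_ad_revenue(1000, share), 2))
```

If every video on the channel gets marked Made for Kids, the estimate drops to a tenth of what it was, which is that 90% loss. A channel with only some affected videos lands somewhere in between.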

Now, not all content creators get ad revenue. You have to have a certain number of subscribers and a certain amount of watch time to even qualify. But there are other effects of this that apply. Losing comments and community tabs means you lose that link to your audience, which is one of the core ideas of internet video; content is affected by those watching it way more directly than in productions like TV and movies, which can take months or years to make.

Losing notifications and recommendations is basically unplugging a video from the algorithm (all hail). It means that any videos that are recommended to you after watching a Made for Kids video will be generic, and it means that Made for Kids videos will probably be limited in where they’re recommended (it’s unclear what YouTube is actually intending here). If losing that connection to your audience isn’t bad enough, it’s now harder for your audience to find you.

YouTube is the one gathering information. By the rules of the FTC, people “on whose behalf” the information is gathered are also liable, but content creators on the platform, while they have access to some of the data via analytics, really have no control over it.

YouTube uses cookies the instant your foot hits their front door. They keep track of everywhere you go, whether you’re signed in or not. The information they gather plays into what they recommend to you, what the algorithm learns about content in general, and it plays into the personalized advertising you get at the start of all of those videos you watch. (Assuming you don’t have YouTube Premium, formerly YouTube Red, or ad blocking.)

There’s room for some complexity

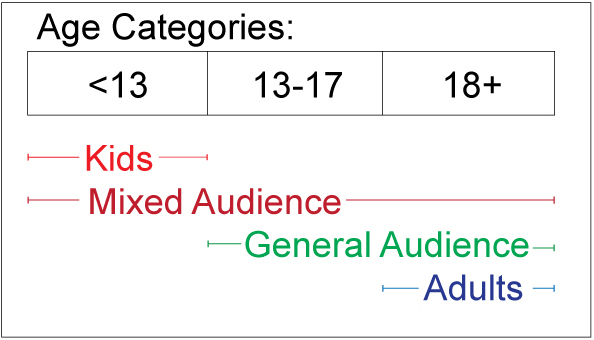

Interesting thing is that COPPA understands that there are grey areas in terms of audiences and has exceptions for them. There’s a whole lot of confusion about this, but my best understanding is as follows:

Kids content is directed exclusively at people under the age of 13; mixed audience is made to appeal to kids and older people; general audience isn’t meant for kids under the age of 13 at all, but can include teens; and adults is, well, for adults.

Content that’s meant for a general audience doesn’t have to worry about COPPA, because it’s aimed at teens and above. Mixed audience does have to worry about COPPA, because kids are part of the audience. Turns out, there are provisions for gathering information on mixed audience content, you just have to take steps to make sure you aren’t gathering personal information about the kids in your audience without permission.

But since YouTube has put the onus of all of this on the content, not on the viewers of the content, they can’t keep track of who is who, and thus “mixed audience” content has to be treated the same in their system as “Made for Kids” content.
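Here’s how I’d sketch those categories in Python. This is just my own reading as laid out above, not legal advice and not anything published by the FTC or YouTube. The enum, the function names, and the label strings are hypothetical.

```python
from enum import Enum


class Audience(Enum):
    KIDS = "kids"        # directed exclusively at under-13s
    MIXED = "mixed"      # made to appeal to kids and older people
    GENERAL = "general"  # not meant for under-13s, can include teens
    ADULT = "adult"      # for adults


def coppa_applies(audience):
    """COPPA matters whenever kids under 13 are part of the intended audience."""
    return audience in (Audience.KIDS, Audience.MIXED)


def youtube_label(audience):
    """The setting lives on the content, not the viewer, so mixed-audience
    content lands in the same bucket as kids content.

    The label strings are illustrative placeholders, not YouTube's exact wording.
    """
    return "Made for Kids" if coppa_applies(audience) else "Not Made for Kids"


if __name__ == "__main__":
    for audience in Audience:
        print(audience.value, coppa_applies(audience), youtube_label(audience))
```

The point of the second function is that four categories collapse into two: COPPA itself leaves room for mixed-audience content to be handled differently, but a per-video switch doesn’t.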

YouTube made a choice

Could YouTube have found a different solution? Definitely. They would probably have had to make changes in managing users, which would be difficult and possibly expensive. They track everything in order to make money; in fairness, they do have to pay for the free-to-use platform somehow. But they’re also losing money from the reduced advertising on newly Made for Kids videos, though the algorithm’s changed relationship with those videos means they might not be seen as much anyway. They were in an unideal situation (of their own making) and decided that their best way forward was to throw the content creators under the bus.

There is a lot of speculation about how this will change the tone and content on the platform, how it will silence or push out good quality content that is actually safe for kids, and reward content that is more abrasive and intentionally mature. It sounds a little doomsday, but a lot of good content has already been removed, made private, or tagged itself into obscurity.

What is likely to happen at this point?

The FTC was obviously bombarded by comments (170,000 of them), all of which they need to review in the coming months, and which may prompt additional changes to COPPA. At the end of the day, the FTC is unlikely to go after most YouTubers, particularly those who are not big companies. They have a limited budget and limited time, and if you look at their past lawsuits and the comments they have made since the announcement, they aren’t interested in edge cases; they’re looking to go after clear-cut violations. Still, that’s no guarantee for the craft kit reviewers or the video game streamers. The law could (and probably will) change again, and the people at the FTC will definitely change again.

The thing that is (or should be) more of a concern for YouTubers is the impact of the machine-learning systems that are automatically marking videos Made for Kids, and how that will affect the algorithm. Discoverability is super important, and I personally am not a fan of putting hard work into creating content and then throwing it into a black hole.

There are three players in this game: the user, the platform, and the content. COPPA is about preventing the platform from being irresponsible in collecting information about the user, and yet YouTube, the platform, has set the onus and penalties for this on the content.

As a result, I’m leaving YouTube. I don’t like that they knowingly exploited kids and broke the law, I don’t like how they chose to enact change on the site, and, after having been thrown under the bus by them twice now, I don’t trust that they won’t do it again.

This doesn’t mean I’ll stop making content. It just means my platform is changing. I’ve done a fair amount of research into alternatives that provide the best replacements for the different qualities of YouTube, and I’ll share it with you soon, in part two. Follow me on Twitter and Instagram, and join my mailing list if you want to make sure to catch the next part.

This is confusing and complicated and the information about it is complex (the 2013 amendment is 43 packed pages long with detailed footnotes). Here are some of the most useful resources I’ve found:

Lawyers talking about it on YouTube:

From the FTC:

- FTC – Complying with COPPA FAQs

- YouTube Channel Owners, is your content “directed to children?”

- General audience, teen, and mixed-audience sites or services

From Google/YouTube: